It's not all about the tools

It's about human goals and what the tools help you see.

I’ve been thinking a lot about tools and the impact the tools we choose have on what we create with them. It’s not a new idea. Edward Tufte made this point back in 2003 when he told us how PowerPoint influences our positions. It doesn’t just shape our output; it shapes our thinking. Our positions are clandestinely influenced by every template, every formatting default, and the constrictive dimensions of each slide. PowerPoint didn't just change how ideas were presented, it changed what ideas were considered presentable. This classic tale is getting a reboot with modernized characters following the same arc. Have you ever rounded the corners of an idea to make it fit within a slide? Or excised clarifying detail because the deck was getting long? If you have, like me, you’re familiar with the protagonists in this movie.

Set Dressing Vs. World Building

In the lineage of UX tools, from Photoshop to Figma, the final output is subject to a similar kind of influence. Here the incentive is not economy so much as efficiency and speed. As we rush to articulate how our work will be presented, we are encouraged to prioritize surface over substance. We squish content into existing components, labor over type hierarchies, experiment with fluid layouts, and wrestle with different corner radii. Prototypes simulate the appearance of a system without being governed by its data, performance constraints, or security requirements. Generated spaghetti code can be persuasive when it works.

While designs can be “interactive”, the resolution of the information they hold is only slightly higher than the static keyframes we once created in Photoshop. Like storefronts on a movie set, the output is artifice, a facsimile that imitates a space where commerce would occur. It’s a clever marriage of dummy data and placeholder text. FPO all the way down, precisely drawn and adaptive to the size of the window it’s displayed in. But like the blunt slides in PowerPoint, these artifacts still fail to capture the complexity of what we’re proposing. They’re still static frames in a larger story. They look real but they don’t actually work.

While our tools have changed with predictable reliability, the bias persists. We can add the gloss of realistic data or curated thumbnails, but the story is largely superficial because the machinery that enables it hasn’t been built yet. We haven’t cast the engineering team yet and the producers are still trying to secure funding.

The Surface Is Inhospitable

The story builds to a crescendo with the “harmony between hardware, software, and content” that is Apple’s Liquid Glass. This new design system creates a beautiful, technically sophisticated effect that also happens to burden users with low-contrast content and tax devices with the computational needs for rendering its theatrics. Even Apple, with an army of world-class designers and titanic piles of cash, has struggled with the details. When your UI renders startlingly convincing effects while also making it hard to resize a Finder window, we’re left to wonder if “It just works” is a motivating philosophy. The effects are dazzling, but what story are we trying to tell here?

A New Hero Emerges?

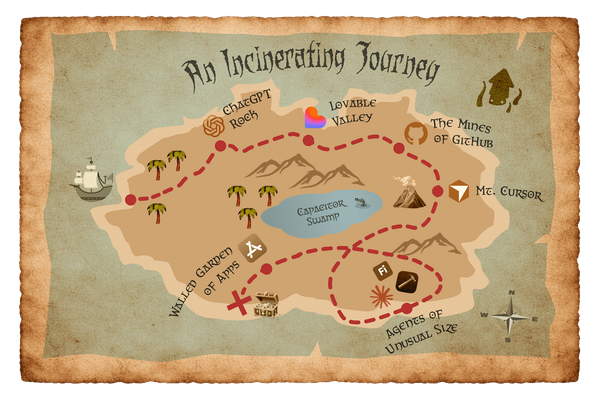

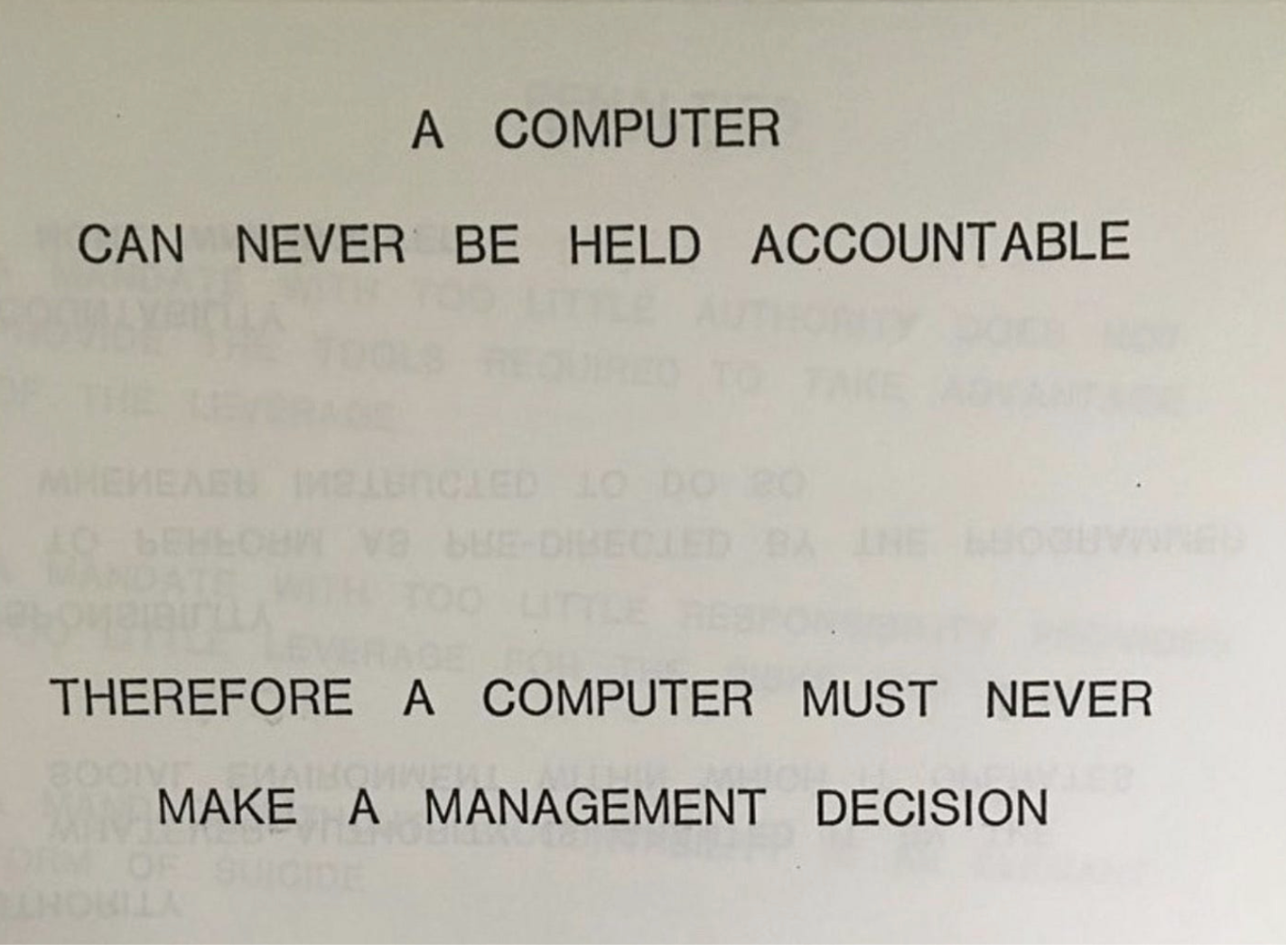

Now that AI tools have entered the frame, we could argue that a new hero is emerging. Even someone without technical expertise can summon databases, conjure APIs, and manifest a completely functional app. We can break free of the gravity of the surface with Claude Code or Codex! But these tools also bring us new influences to contend with. Every tool we’ve used in the past was deterministic — you decide what to do and it enables execution faithfully and repeatably. Our expectations, built over decades of computing, are that the machine does exactly what it's told, every time. That reliability is the foundation of the world where designers and PMs learned to work. Trust the outputs and iterate on decisions rather than execution.

The probabilistic output of LLMs breaks that contract fundamentally. The same prompt can produce different results. The agent, while superficially presenting as consistent, may be leading a team of designers down divergent paths. The tool can be confidently and convincingly wrong. “I fixed the issue.” “The application is now highly secure.” “The content meets a high standard of accessibility.” There is no reliable way to audit whether it did what you asked without independent expertise. But the tools also seduce us into thinking we don’t need anyone else.

Chat interfaces compound the issues. The blank text field does not reflect the constraints and editorial point of view you would find in a traditional UI. The conversational exchange feels authoritative and relatable without the governance of domain expertise behind it. Layer in sycophancy and variable reward mechanics and the conditions for uncritical dependence are firmly in place.

Say it with me:

You Will Be Assimilated

As we assimilate AI tools, there will be a lot for us to unlearn because the behaviors that made us effective in the past become liabilities with LLMs. You simply can’t trust the output (as some unfortunate lawyers have learned) and validating the output is more complex and more costly. I burned hours and credits leaning on the agent to fix my app’s authentication issue and must take it on faith that it’s resolved and releasable. It sounded correct. The agent told me so without a hint of hesitation.

Vast new categories of errors will be embedded into the systems we depend on and we will have to sift through each individual frame to find them. They look correct so we will need to lean on hard-won expertise for detection. We can crank out new product with lightning speed but must maintain a firm grasp of the existing obstacles and user goals for it to have any usefulness. What use is creating the wrong thing faster? Iterating on our understanding of what is important, what creates value, and what will sustain a business needs more attention than the execution.

These are deeply entrenched habits built over a lifetime of working with deterministic systems. Unlearning them is not intuitive; it runs counter to everything computing has taught us about how machines behave. But superficial acceptance of every agent’s confident misdiagnosis will lead us into confusion and conflict. In this world, computers can’t do math but trade on the authority of the machines that can. And it’s not likely things will get better anytime soon. Even OpenAI admitted hallucinations are mathematically inevitable. We’ll need to evolve, because we can do so sooner than LLMs will.

Please Hammer Don't Hurt 'Em

There is a very tangible burden when the tool is probabilistic and you lack the expertise to audit its output. As the Prime Mover of a native iOS app, I remain genuinely uncertain about its security, code quality, and reliability. That does not stem from carelessness but from the structural absence of anyone who knows enough to tell me when something is wrong. Asking “pretty please do not introduce a security vulnerability” in a prompt is simply not adequate. Without collaborators, critics, or domain experts, we put all our chips in with a probabilistic slot machine that exploits our very human vulnerabilities. That feels like the newest, darkest pattern to me. The tool amplifies velocity but cannot compensate for gaps in vision it cannot see and will not admit to. This is cognitive surrender and we end up accepting a reality where computers are not bicycles for the mind but instead surrogates for it.

King Of The Wild Frontier

When we see the claim that “AI will not take your job, but someone who understands how to use AI will”, understanding these shifts is what they’re talking about. Anyone can coax a website out of Claude Code, just like any small child can quickly understand how to use an iPad. But we should not simply be subordinate operators of a tool. Purpose and direction should come from us.

The shift from deterministic outputs to probabilistic ones is not just a technical change. It is a fundamental shift in our relationship with the tools themselves. Every previous tool in this lineage rewarded individual mastery, but LLMs make rugged individualism a liability. Collaboration and domain expertise are more important now, not less. We’ll need to figure out how to ensure the new velocity AI tools enable leaves enough space for human expertise to exert its influence and shape the experience. If we’re going to continue to be human-centered, we’ll need a process that is grounded in an understanding of human problems. We’ll need to talk to actual people, both users and practitioners. Waiting until the end for a knowledgeable gatekeeper to wave things through would continue the deference to machines that has already started.