Collaboration and Expertise Are Signal, Not Noise

Last week, four astronauts splashed down in the Pacific after traveling farther from Earth than any humans have in more than fifty years. The spacecraft Integrity was built with the combined expertise of thousands of engineers, scientists, and mission crew. This product of a sustained international collaboration carried them around the Moon and back. While there has been substantial fanfare since the astronauts’ return, literally no one has suggested that the most efficient path to the Moon would have been to remove the humans from the equation.

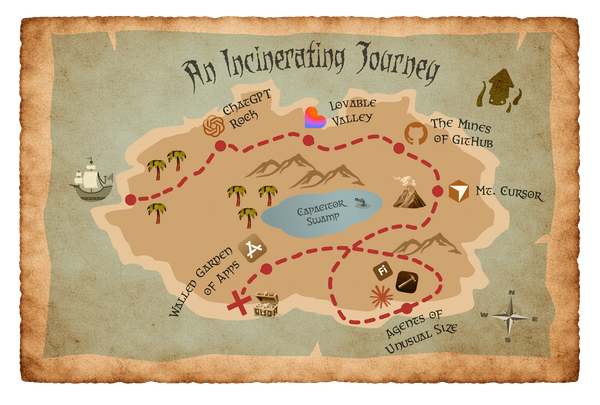

In my first two posts I described building a native iOS app alone, using AI tools I didn't fully trust, and argued that the probabilistic nature of those tools makes individual efforts a liability in ways that deterministic tools do not. Today I look at Artemis II as a clear counterargument to the idea that collaboration and domain expertise are inefficiencies to be optimized away, and I argue that the echo chamber of sole authorship is a structural feature of how these tools are designed. The sense of inspiration and pride that tens of thousands of people felt watching the splashdown of Integrity is a signal worth paying attention to as we continue to assess and assimilate AI in our own lives.

Code that's internally consistent but not true

Recently Dorian Taylor made the observation that “code only has to be internally consistent: it (mostly) doesn’t have to reference facts about the world.” I’ve experienced that with Eye•Full. The app understands itself and how it is intended to work. It knows the difference between a “description card” and a “flip card”, core concepts I established for the initial prototype. Its code compiles, the logic holds, the tests pass – but there is nothing in place to test my assumptions or check to see if it is solving a meaningful problem. It feels right because nothing within that system contradicts it. I could have made a doomsday clock or a field guide for identifying aliens that would have been just as coherent and equally flawed.

This barrier to outside influences can also be isolating. Solitary vibe coders are cut off from external elements that might introduce friction, force us to polish rough edges, or question our assumptions. There is little in place to motivate a shift in direction other than gut instinct or personal preferences. Consequently, we’re in a space where coherent wrongness is indistinguishable from coherent rightness until it is subjected to scrutiny and the known truths of reality. Add in the obsequious enthusiasm and bottomless praise you get from an LLM and you end up with mechanics that are not dissimilar to a cult. If you’re vibe coding a mindfulness app no one asked for (ahem), the consequences are forgivingly small. But if you’re funding a new location-based health app with your kid’s college fund, the risks increase spectacularly.

The "no colleagues" provocation

Pavel Samsonov’s observations give this sense of isolation another dimension as he looks at how these tools affect our social relationships and how designers can anticipate their effect on their own roles in these systems:

“The design process — hell, the entire product delivery lifecycle — is a structure that manages relationships of service between parties. The entire thing is governed by formal and informal agreements of exchange.

“Or, it used to be. Because one of the highest-profile promises of the so-called AI era is that service relationships are for dopes, and you can opt out of them. People can be replaced entirely, or at least pushed to arm’s reach, by agents who will perform the same tasks for you… The value proposition of AI tools is not ‘more productive colleagues’ but ‘no colleagues at all.’”

But if we choose frictionless individual productivity over the inconvenience of outside expertise and objections, we choose something anti-human in the name of efficiency.

The resistance built into collaboration is not a bug to be triaged. When someone tells you “that’s not how people actually use it” or “that API has a known security issue,” you’re encountering the natural force that helps ensure you are building the right thing, that it will function predictably and effectively, that it can be maintained, and that it can be adapted as we continue to learn in the future. AI may write code faster, but shipping code quickly is subordinate to larger questions about who benefits from it, what problems it will solve, and how it can be misused. As Matthias Ott has written: “friction isn’t the enemy of good work. Friction is where good work gets its shape.” We must choose human impacts over machine outputs. Short-circuiting the process by removing the scrutiny doesn’t get you better software. It gets you slop and a surface area you don’t understand.

A product that ships without being tested against real user needs, reviewed by domain experts, or challenged by people with different perspectives is not a faster version of a good product. It is a different kind of product whose flaws are invisible until they meet the people it was supposed to serve. Maybe you can get away with it when the stakes are acceptably low. But when the task is mind-bendingly complex, like a journey to the Moon, it’s a decade of sustained effort from expert humans that we trust for the job.

What expertise actually does that AI cannot

We can look to Eye•Full for another real-world example of how my lack of expertise led to unintended consequences. The app has a feature where the background slowly morphs. I wanted to reinforce the theme of the app with something you would only notice if you were paying careful attention to the details. After a few rounds of iteration and tweaks to the animation, the AI provided exactly the gentle, meandering effect that I imagined. What it didn’t account for was the cost of redrawing a complex gradient at 60 fps. After a minute or two in the app, my phone would heat up and the battery would drain quickly. An expert might have considered the intersection of performance, battery life, and processing load and recommended a compromise that balanced my design goal with the hardware limitations. They would have anticipated that choosing between battery life and nearly invisible garnish would not be a controversial decision for people to make. The AI, in this case, only made a recommendation after I asked it why my phone was getting hot. Raise the stakes even a little and it’s not hard to imagine more significant consequences.

AI may be able to string together functional code, but that output is difficult to connect to actual expertise. Providers are likely working through this limitation, and it may improve in the future. But for now, if you need pattern recognition beyond the confines of your context window, you probably need an expert. If you need to ensure your users’ health data is encrypted at rest and in transit, you probably need an expert. That person will be able to anticipate and avoid issues and make decisions that help mitigate risks for you or your organization. An expert thinks broadly and creatively about how to act in service of the project goals and factors in a lifetime of hard-won experience in the process. They can anticipate the obstacles an AI might only recognize in hindsight.

Bring your own values

The argument about the values of technology is an old one; I believe technology reflects, embeds, and amplifies human values in a way that shapes human behavior. The VCR didn’t strangle Hollywood like Jack Valenti insisted it would. Tim Berners-Lee imagined a Web that connected all human knowledge, and it also opened a door for disinformation, disruption, and abuse. The cellphone was going to liberate us from our desks and now most of us have trouble setting it down. Each of these technologies delivered on its promise and enabled harms its advocates didn't anticipate and its critics didn't fully imagine. The pattern is consistent: the technology arrives values-neutral and gets imbued with the frailties, biases, and incentive structures of the people who build and deploy it.

LLMs are no different. They are trained on the accumulated record of human knowledge and human failure in roughly equal measure. The superhuman capabilities they promise are built on the same prejudices, blind spots, and motivated reasoning that produced the training data. There is no version of AI that arrives pre-equipped with good values. There is only the version we build, and the values we bring to the building.

The personal software moment is real. Eye•Full, Commutely, and a growing list of others are evidence of it. But it’s unclear if the apps Nilay Patel described as “too small and too pointless for anyone else to make” will produce genuine value or just accelerate fragmentation. What happens when we have a dense constellation of personal projects designed in isolation where interoperability is not guaranteed? How will other people react to an abundance of idiosyncratic tools solving problems in ways they don’t understand or that don’t align with the workflows they prefer? The same logic of independent teams with independent deployment led us to microservices. But that didn’t make the friction go away; the friction just became harder to see. Success here will depend entirely on whether practitioners bring judgment, collaboration, and domain expertise to what they build. The technology won't supply those things. It will reflect whatever it's given. If it's given velocity without values, it will prioritize velocity over everything else. If it's given the accumulated expertise of people who understand their users, their domain, and the ways things go wrong, it can produce something worth having.

Look to the stars

The Artemis crew didn't fly to the Moon alone and they didn't fly without values. The spacecraft was named Integrity for a reason. Thousands of people spent careers understanding how things go wrong in deep space so that four humans could travel farther from Earth than anyone in more than half a century and come home safely. That is not an argument against technology. It is an argument for what technology looks like when humans decide the endeavor is worth doing well.

Practitioners face exactly that choice now. AI tools will fulfill the role we provide for them in the products we build, the processes we design, and the values we either deliberately preserve or quietly abandon in the pursuit of velocity. Collaboration and domain expertise are not inefficiencies. They are how the values get in. They are how the work stays accountable to the people it's supposed to serve. They are how we remain the authors of what we're building rather than its operators.

We have seen enough of what happens when technology gets deployed without either expertise or an understanding of human needs. The Artemis crew, and everyone who made that mission possible, have shown us what human expertise and vision look like when they're treated as irreplaceable rather than optimizable.

We can start by making deliberate collaboration practices and expert consultation prerequisites in our new workflows that involve AI. We can pierce the insular logic of AI output with steps where inquiry and feedback involve actual people. That may sound expensive, but what is more costly than building the wrong thing? We are capable of excellence when we tap into the expansive universe of human expertise, and we shouldn’t accept anything less.