Oh No, Not Again

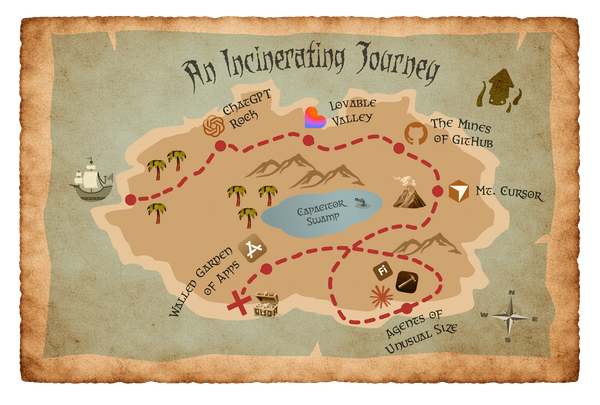

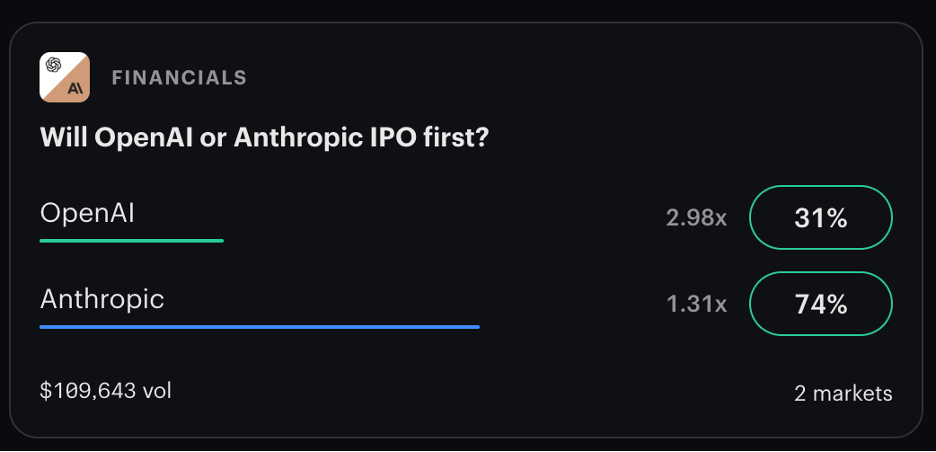

I want to switch gears and talk more explicitly about costs, the slippery details around how much a token is worth, and what we might look forward to as major providers like OpenAI and Anthropic get ready for their IPOs, which are expected this year.

To do that, I want to go all the way back to 2016, the mania around ridesharing apps, and a story by Ben Gilbert in GeekWire where he described the experience of selling his car and going “full Uber”. He had crunched the numbers and concluded that car ownership was costing him $4,000 per year. He had identified other tangible benefits as well: he would never worry about parking, drinking and driving would be impossible, and transit time could be reallocated to being more productive or invested in an undistracted conversation. I can see how the cost-benefit analysis would pencil out when an Uber ride to downtown Seattle cost 6 bucks.

Even then, Ben knew that these perks were enabled by vast amounts of investor money and driven by incentives to get customers to adopt these services in their regular routines. He also gave a nod to the potential of autonomous vehicles to help make this car-less lifestyle viable, at least in the city.

Fast forward a few years and we see that autonomous driving is still in its infancy, that Uber fares rose 92 percent between 2018 and 2021, and that the providers are keeping a bigger share of the revenue they used to give to drivers. Reportedly, Uber rides in Seattle cost more than in the rest of the US. A 30-minute ride will cost you around $60. With IPOs completed, regulatory battles largely settled, and an established behavioral shift away from taxis and public transit, the rideshare companies have concentrated on profitability. Going full Uber now seems like a more treacherous proposition.

While the ridesharing honeymoon may be over, folks are still swooning over AI. I’ve seen a fair amount of critique around energy usage and water consumption, but I have seen much less on the real cost of tokens. How much each unit of data costs is still very opaque, although there is some speculation that API usage may be 90% subsidized. In my own project, these artificially low costs have left me little reason to govern my usage. I prompt. I fail. I prompt again. The cost of iteration and error recovery is comparatively trivial, so I don’t have to worry about efficient, optimized workflows.
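To put a rough number on that speculation: if the price I pay covers only 10% of what a token actually costs to serve, then the unsubsidized price would be 10 times higher, not 90% higher. Here is a back-of-the-envelope sketch of that arithmetic. It is not real pricing. The subsidy rate is the speculated figure above, and the per-token price, tokens per attempt, and attempt count are hypothetical numbers I picked for illustration.

```python
def unsubsidized_price(price_paid: float, subsidy_rate: float) -> float:
    """Price that would cover the full cost if the subsidy went away.

    subsidy_rate is the fraction of the true cost the customer does NOT pay.
    """
    if not 0 <= subsidy_rate < 1:
        raise ValueError("subsidy_rate must be in [0, 1)")
    return price_paid / (1 - subsidy_rate)


def iteration_cost(price_per_million: float, tokens_per_attempt: int, attempts: int) -> float:
    """Total cost of a prompt-fail-prompt-again session."""
    return price_per_million * tokens_per_attempt * attempts / 1_000_000


# All values below are assumptions for illustration, not published prices.
SUBSIDY_RATE = 0.90          # the speculated subsidy
PRICE_PER_MILLION = 10.00    # hypothetical USD per million tokens today
TOKENS_PER_ATTEMPT = 50_000  # hypothetical tokens burned per attempt
ATTEMPTS = 20                # hypothetical retries in one working session

today = iteration_cost(PRICE_PER_MILLION, TOKENS_PER_ATTEMPT, ATTEMPTS)
full_price = unsubsidized_price(PRICE_PER_MILLION, SUBSIDY_RATE)
later = iteration_cost(full_price, TOKENS_PER_ATTEMPT, ATTEMPTS)

print(f"Session cost today:           ${today:.2f}")
print(f"Session cost without subsidy: ${later:.2f}")
```

With these made-up inputs, the same sloppy 20-attempt session goes from about $10 to about $100. The exact figures don't matter; the multiplier does.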

But there have been signals that this incentive structure won’t be in place forever. We can see the shifts taking place now. Anthropic raised the price for subscribers using OpenClaw. Fidji Simo shut down OpenAI’s short-form video app Sora and led a strategic shift away from “side quests”. Microsoft just tightened rate limits and plans to move users to token/API-based billing later this year. If past is prologue, the incentives will remain for as long as it takes to drive adoption and shift behaviors (or until the money runs out). What’s the price? Price is, price is going up. But what happens when your subscription costs go up 90% or more? What happens when you hit a usage quota, but the job’s not done and you need to score some more tokens? What happens when your infrastructure and your workflows are so integrated with AI and agentic processes that disentangling from an LLM provider means starting over from scratch? It’s hard to talk about the cost of dependency when the costs themselves are so opaque.

We may get lofty rhetoric from Sam Altman or Dario Amodei, but there are risks if we put too much trust in their public statements about “changing the world” and “empowering users”. The reality does not seem that much different from the ridesharing maneuvers that preceded all this. Anthropic “is loosening its core safety principle in response to competition.” The previous policy required pausing development if capabilities outstripped safety controls, but that requirement has been removed. OpenAI is “running at lightning speed” to build its advertising business. These are just small reminders of what we know to be true: investors will want returns on their investments (some more than others). The pull quotes will tell us a promising story of unlocked potential, but the reality is that these men will be burdened by monumental financial pressure from contracts, from competitors, and from their IPOs. It doesn’t seem unreasonable to expect these converging forces to harden each company’s softer ideals.

It’s also easy to center Altman and Amodei in these conversations, but the AI space is a complex system with many influential players and high stakes. Picking “the good one” may not be as influential as you hope. While Dario Amodei opposed the 10-year moratorium on AI regulation, that goal is still being pursued by the Trump administration, Google, Meta, and others. The reality is that there is still no comprehensive federal legislation despite the position of a major player. These tech leaders are not part of an ordered coalition; it’s a chaotic land grab. Prices and policies will be influenced by capitalism and not by altruistic devotion to human progress.

The problem is that the AI project, more than others that preceded it, risks degrading the humans themselves. With cognitive surrender, professional and social isolation, and deskilling of experts, the darkest AI timeline may not place much value on a user’s will at all. That might help explain why 50% of Americans are concerned about the increased use of AI in their daily lives. AI is not just creating behavioral changes; it’s creating cognitive ones. As individuals we might be able to adopt forms of resistance by avoiding AI tools and enduring cognitive struggle or by devoting ourselves to sustained collaboration with humans in other disciplines. But individual actions are no remedy for systemic problems. Communities like Monterey Park are fighting off the infrastructure, but there are more than 1,500 new data centers in development nationwide (mostly in rural areas). Even collective actions may not be sufficient to stall progress.

I don’t really have any answers. All I can offer is cope. We know the honeymoon will end, so we should take this time to build resiliency into our practice and into the cognitive habits our practice depends on. Find opportunities to insert human expertise to avoid costly iteration with an agent. Develop workflows that are provider-agnostic or that leverage multiple providers based on their unique strengths. And slow down. Take the time to research where you can remove pain, remove friction, and fill meaningful gaps. Build the right thing faster instead of using AI to crank out 10x the number of features. Leverage AI in service of human goals and solutions to human problems. If we lose sight of that orientation, we abandon a lot more than revenue. We lose a bit of our humanity. That doesn’t sound very empowering to me.